Understanding Inconclusive Results

An Inconclusive result means that a performance test could not reach statistical significance after a full set of test iterations.

Recall from the Performance overview that Emerge runs numerous iterations of a performance test against a base and a head build of an app. It controls for noise (using network replay, disk restore, and other methods) and performs statistical calculations to determine, with 99% confidence, whether the app's performance changed between the two builds.
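To make the idea of a 99% confidence decision concrete, here is a minimal sketch of one way such a comparison could work. It is not Emerge's actual calculation, which this page does not describe. The function name `compare_builds`, the normal approximation, and the sample timings are all assumptions made for illustration.

```python
from math import sqrt
from statistics import NormalDist, mean, variance


def compare_builds(base, head, confidence=0.99):
    """Classify head vs. base timings as regressed, improved, or inconclusive.

    Illustrative only: builds a confidence interval for the difference of
    means using a normal approximation (an assumption, not Emerge's method).
    """
    diff = mean(head) - mean(base)
    # Standard error of the difference between two independent sample means.
    se = sqrt(variance(base) / len(base) + variance(head) / len(head))
    # Two-sided critical value (about 2.576 for 99% confidence).
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    low, high = diff - z * se, diff + z * se
    if low > 0:
        return "regressed"      # head is slower; the interval excludes zero
    if high < 0:
        return "improved"       # head is faster; the interval excludes zero
    return "inconclusive"       # the interval contains zero


# Hypothetical timings in seconds.
quiet_base = [1.00, 1.02, 0.98, 1.01, 0.99] * 4
quiet_head = [1.10, 1.12, 1.08, 1.11, 1.09] * 4
noisy_base = [1.0, 2.0, 0.5, 1.5] * 5
noisy_head = [1.1, 2.1, 0.6, 1.6] * 5

quiet_result = compare_builds(quiet_base, quiet_head)
noisy_result = compare_builds(noisy_base, noisy_head)
```

Both pairs differ by the same 0.1 s on average, but only the low-variance pair yields an interval that excludes zero. The noisy pair stays inconclusive, which is the situation this page describes.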

When a result is inconclusive, Emerge could not confirm a performance change despite its variance control, usually because of behavior changes in the app's code or other sources of variance.

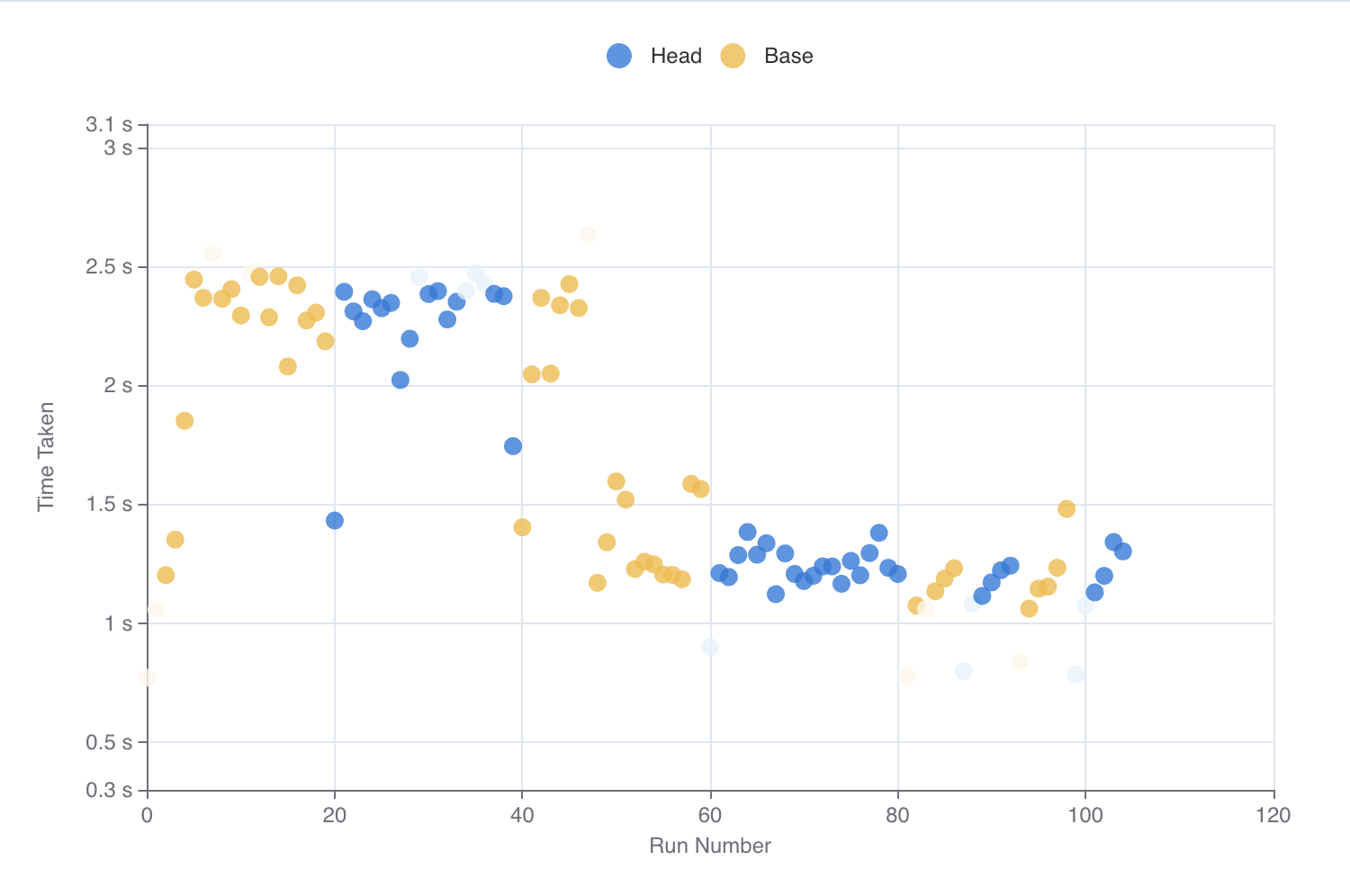

An example of an inconclusive performance test run. After ~100 iterations, Emerge could not reach statistically significant results. Notice that around iteration 45, the measured time for each test drops by ~1s, which prevents Emerge from confidently detecting a change.
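A mid-run shift like the one in this example inflates the spread of the whole sample, even when each half of the run is stable on its own. The sketch below illustrates this with made-up timings. The helper `level_shift`, the split point, and the values are hypothetical and are not taken from the run pictured above.

```python
from statistics import mean, stdev


def level_shift(samples, split):
    """Return (mean before split, mean after split, overall standard deviation)."""
    before, after = samples[:split], samples[split:]
    return mean(before), mean(after), stdev(samples)


# Hypothetical timings in seconds: steady around 5.0 s for 45 iterations,
# then about 1 s faster for the remaining 54 iterations.
steady = [5.0, 5.1, 4.9] * 15
shifted = [4.0, 4.1, 3.9] * 18

before, after, overall_spread = level_shift(steady + shifted, split=45)
steady_spread = stdev(steady)  # spread of the first 45 iterations alone
```

Here each half varies by less than 0.1 s, but the overall standard deviation is several times larger because the two halves sit about 1 s apart. Wider spread means wider confidence intervals, which makes a real change between builds harder to confirm.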

Common causes of Inconclusive results

Inconclusive results have many possible causes. Most commonly, app code behaves differently across iterations or, particularly on Android, test code introduces variance between iterations.

If you're having trouble understanding Inconclusive results, let the Emerge team know and we can dive in!

App code variance & non-deterministic behavior

The most common source of inconclusive results is an app's code behaving differently across iterations. This is often caused by sources of noise such as cache expirations and multithreaded scheduling.

Emerge recommends measuring code and flows that are easily repeatable. Additionally, using spans on iOS and Android focuses performance measurements on specific sections of app code. This narrows the scope of the code being measured and limits the effect of noise.

Test code variance

Test code is also a common source of variance, particularly on Android. If spans are not used on Android, Emerge measures the full duration of the test code by default. Intentional waits or assertions that ensure views are present add downtime to the test, and that downtime can differ significantly across test runs.
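The effect of test-code downtime can be illustrated with a small calculation. The numbers below are hypothetical. They assume the app work itself is stable while the time the test spends waiting for a view varies from iteration to iteration.

```python
from statistics import stdev

# Hypothetical per-iteration timings in seconds.
app_work = [0.80, 0.82, 0.79, 0.81, 0.80, 0.78]       # the code under measurement
polling_wait = [0.10, 0.60, 0.25, 0.90, 0.05, 0.45]   # test waiting for a view

# Without spans, the measurement covers the whole test: app work plus waiting.
full_test = [work + wait for work, wait in zip(app_work, polling_wait)]

span_spread = stdev(app_work)    # spread if only the app code were measured
test_spread = stdev(full_test)   # spread when the whole test is measured
```

With these values, the spread of the full test duration is more than ten times the spread of the app work alone, so the waiting time dominates the measurement.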

As with app code variance above, Emerge highly recommends using spans on iOS and Android to focus performance measurements on specific sections of app code rather than on test code.
