Absolute vs. Relative Timing

When we think about performance, we generally think about how long a user waits, measured in seconds. We might look at the p50 time in production and see how it changes, for example from 0.7s to 0.6s.

However, for performance testing, it's more common to think in terms of percentage change. A PR might be measured to increase startup time by 4.5%, for example. We use percentages because performance typically varies widely between devices (the p99 time can be >3x the p50 time). Even between two phones of the same model, one can be half as fast as the other due to its age, CPU throttling, other processes running on the phone, etc. You might run a performance test that gets assigned to a fast phone and see a small regression as measured in seconds, then re-run it on a slower phone and see a larger regression in seconds for the same change, even though the percentage change is roughly the same.
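To make the point above concrete, here is a minimal sketch that compares the same change on two devices. The device timings are made-up numbers chosen for illustration, and `relative_change` is a hypothetical helper, not part of any Emerge API.

```python
def relative_change(baseline_s: float, changed_s: float) -> float:
    """Return the change as a fraction of the baseline (0.045 means +4.5%)."""
    return (changed_s - baseline_s) / baseline_s


# Hypothetical startup times in seconds for the same PR on two devices.
runs = {
    "fast phone": {"baseline": 0.60, "changed": 0.627},
    "slow phone": {"baseline": 1.20, "changed": 1.254},
}

for device, run in runs.items():
    delta_s = run["changed"] - run["baseline"]
    pct = relative_change(run["baseline"], run["changed"]) * 100
    # The absolute delta doubles on the slow phone; the percentage does not.
    print(f"{device}: +{delta_s:.3f}s absolute, +{pct:.1f}% relative")
```

In this sketch the absolute regression is twice as large on the slow phone, while the relative regression is 4.5% on both, which is why the percentage is the more stable number to compare across runs.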

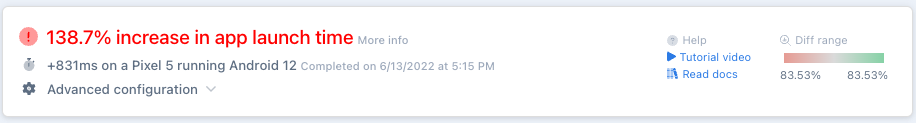

An example of a relative performance change Emerge will display

Generally, it's best to define your startup endpoint for performance testing at the same point where it's measured in production. Percentage changes can then be multiplied, roughly speaking, by the p50 time to understand the impact on p50 users, by the p75 time to understand it for p75 users, etc. We do still provide absolute times for each stack frame when looking at the flamegraph. That information is still useful, but it has to be understood in context.
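The multiplication described above can be sketched as follows. The p50 value reuses the 0.7s example from earlier; the p75 and p99 values and the `estimated_impact_s` helper are assumptions made up for illustration, and the result is only a rough estimate.

```python
def estimated_impact_s(
    relative_change: float, production_times_s: dict[str, float]
) -> dict[str, float]:
    """Scale a measured relative change by each production percentile time."""
    return {
        percentile: time_s * relative_change
        for percentile, time_s in production_times_s.items()
    }


# Hypothetical production startup times in seconds, keyed by percentile.
production_times_s = {"p50": 0.7, "p75": 1.1, "p99": 2.3}

# A PR measured as a 4.5% startup regression in performance testing.
impact = estimated_impact_s(0.045, production_times_s)

for percentile, added_s in impact.items():
    print(f"{percentile}: roughly +{added_s * 1000:.0f} ms")
```

This assumes the change scales proportionally with device speed, which is the same "roughly speaking" approximation the paragraph above makes.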
